Technology

How to Take the First Steps in Scaling Your Business

Most entrepreneurs are hungry to bring their company to the next level.

Whether they operate a family-run business or a rapidly evolving tech startup, there is always another milestone in sight. Business owners want their companies to make an impact with their customers and communities, and they want to keep honing their craft.

But with 27.9 million small businesses in the United States alone, there is no shortage of competition for the same pieces of the pie.

How can you take the first steps toward scaling your business, and do what your competitors are not willing to do?

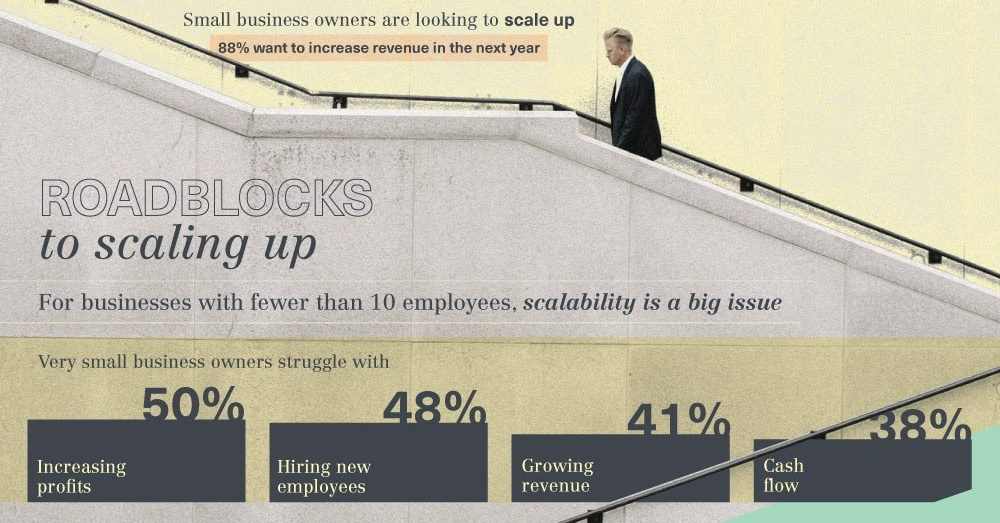

Roadblocks to Scale

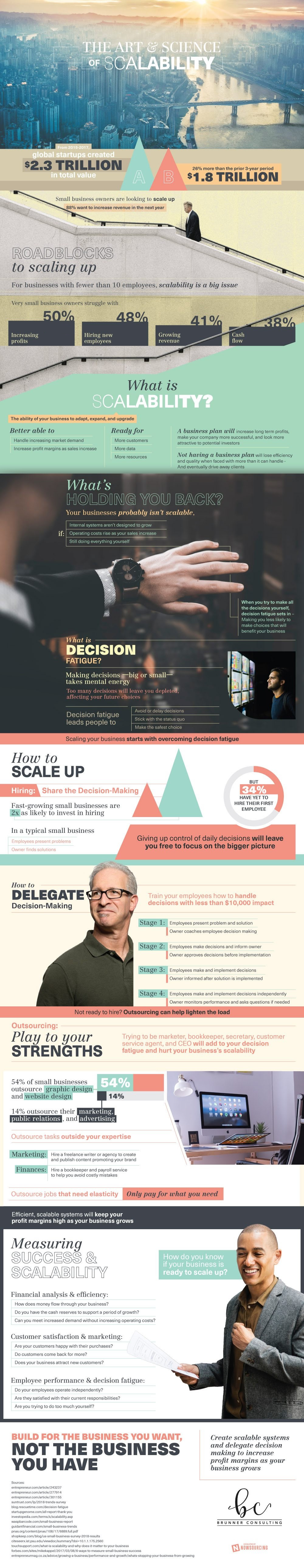

Today’s infographic comes to us from Brunner Consulting, and it breaks down common roadblocks to scaling as well as potential solutions to the problem of decision fatigue.

To begin, we’ll look at a poll of U.S. small business owners, which gives perspective on the challenges most often faced by companies with fewer than 10 employees:

- Profitability (50%)

- Hiring new employees (48%)

- Growing revenue (41%)

- Cash flow (38%)

Unless a business has deep pockets or is venture-backed, several of these obstacles may prevent it from scaling successfully.

A lack of profitability is an obvious limitation, but it’s also clear that revenue growth, cash flow, and adding new employees can be growing pains that may derail any long-term plans.

Decision Fatigue

Why is scaling your business so challenging?

It’s because most types of businesses are not really scalable to begin with. The only sustainable way for most companies to scale is to grow revenue while decreasing operating costs, and for many traditional small businesses (e.g. bakeries, restaurants, hardware stores, and consulting firms) this can be incredibly difficult.

Even if you come up with a scalable business model, there is yet another obstacle that can prevent you from growing the right way: decision fatigue.

In a growing and evolving company, entrepreneurs can’t do everything, and when they try to make every decision, big or small, the quality of those decisions suffers. Decision fatigue can make owners unnecessarily risk-averse, keep them clinging to the status quo, or lead them to avoid decisions altogether.

Scaling Your Business: First Steps

For a business to grow, there has to be more than one decision-maker.

There are two main routes to this:

1. Delegate Responsibility

In a typical small business, employees find and diagnose problems, while owners focus on solving them. However, delegating these day-to-day decisions to employees frees owners to work on the big-picture items that can fuel growth.

2. Play to Your Strengths

Entrepreneurs can’t do it all, so it’s best to play to your strengths. To do this, outsource business functions that fall outside your wheelhouse. These often include bookkeeping, marketing, customer service, or website design.

Decentralizing decision-making is one of the first steps in scaling your business – and no matter how you do this, it frees you to focus on the big problems.

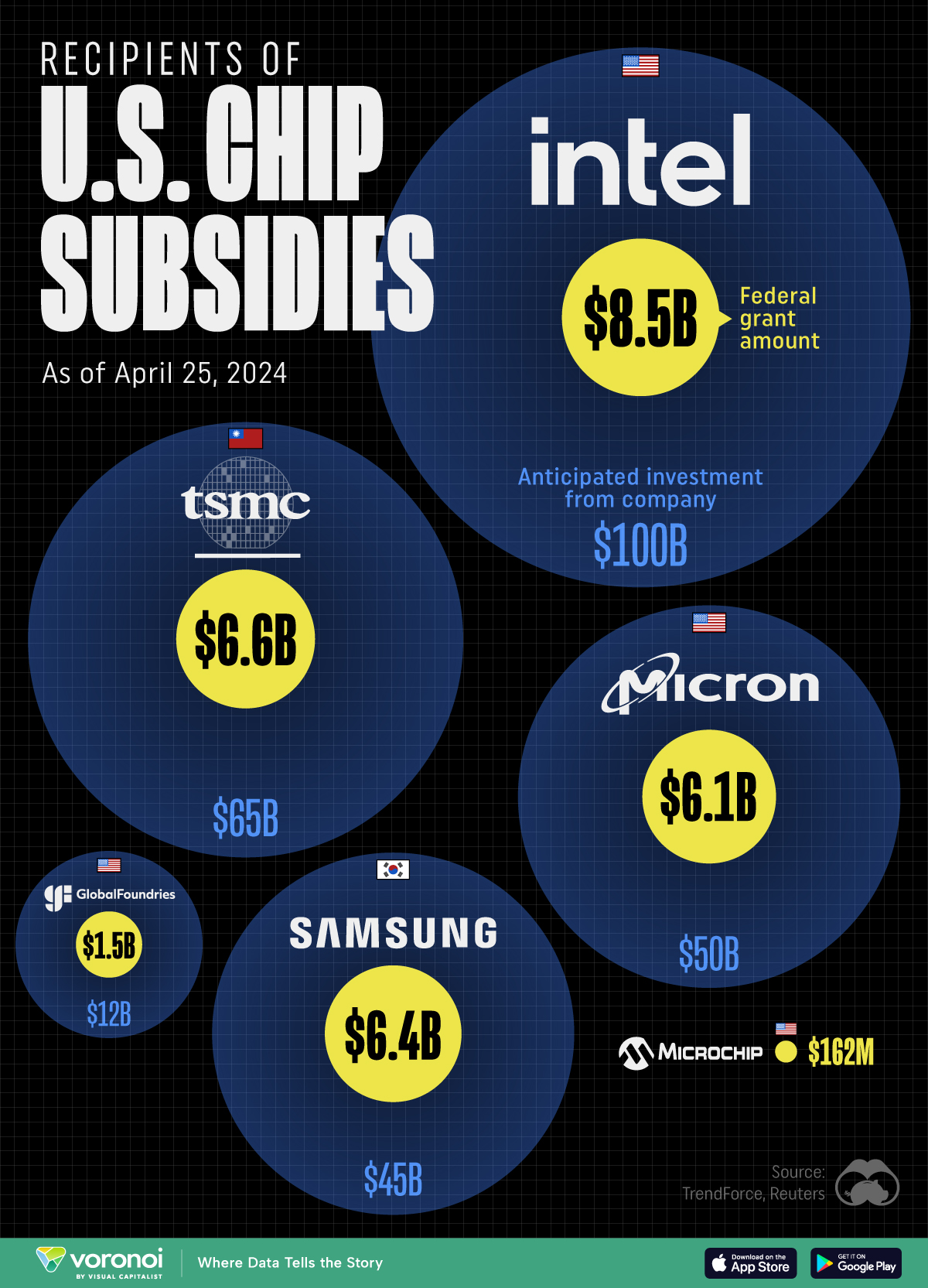

All of the Grants Given by the U.S. CHIPS Act

Intel, TSMC, and more have received billions in subsidies from the U.S. CHIPS Act in 2024.

This was originally posted on our Voronoi app.

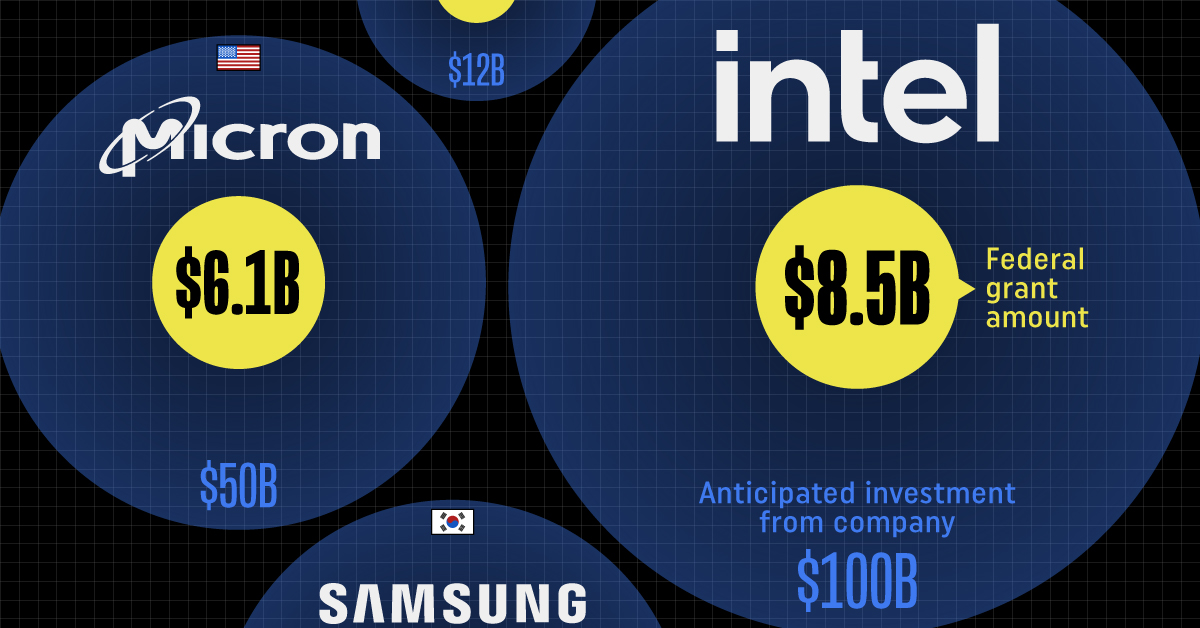

This visualization shows which companies are receiving grants from the U.S. CHIPS Act, as of April 25, 2024. The CHIPS Act is a federal statute signed into law by President Joe Biden that authorizes $280 billion in new funding to boost domestic research and manufacturing of semiconductors.

The grant amounts visualized in this graphic are intended to accelerate the construction of semiconductor fabrication plants (fabs) across the United States.

Data and Company Highlights

The figures we used to create this graphic were collected from a variety of public news sources. The Semiconductor Industry Association (SIA) also maintains a tracker for CHIPS Act recipients, though at the time of writing it does not have the latest details for Micron.

| Company | Federal Grant Amount (USD) | Anticipated Investment From Company (USD) |
|---|---|---|
| 🇺🇸 Intel | $8,500,000,000 | $100,000,000,000 |
| 🇹🇼 TSMC | $6,600,000,000 | $65,000,000,000 |
| 🇰🇷 Samsung | $6,400,000,000 | $45,000,000,000 |
| 🇺🇸 Micron | $6,100,000,000 | $50,000,000,000 |
| 🇺🇸 GlobalFoundries | $1,500,000,000 | $12,000,000,000 |
| 🇺🇸 Microchip | $162,000,000 | N/A |
| 🇬🇧 BAE Systems | $35,000,000 | N/A |

BAE Systems was not included in the graphic due to size limitations.
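To put the table in perspective, here is a short Python sketch that totals the grants listed and expresses each grant as a share of the company's anticipated investment. The dollar figures are copied from the table; the function names are hypothetical, chosen only for illustration, and companies marked N/A are skipped rather than treated as zero.

```python
# Grant and anticipated-investment figures from the table above, in U.S. dollars
# (as of April 25, 2024). None marks an investment figure reported as N/A.
grants = {
    "Intel": (8_500_000_000, 100_000_000_000),
    "TSMC": (6_600_000_000, 65_000_000_000),
    "Samsung": (6_400_000_000, 45_000_000_000),
    "Micron": (6_100_000_000, 50_000_000_000),
    "GlobalFoundries": (1_500_000_000, 12_000_000_000),
    "Microchip": (162_000_000, None),
    "BAE Systems": (35_000_000, None),
}


def total_grants(data):
    """Hypothetical helper: sum every federal grant in the table."""
    return sum(grant for grant, _ in data.values())


def grant_share_of_investment(data):
    """Hypothetical helper: each grant as a percentage of the company's
    anticipated investment, skipping companies with no reported figure."""
    return {
        name: round(100 * grant / investment, 1)
        for name, (grant, investment) in data.items()
        if investment is not None
    }


if __name__ == "__main__":
    print(f"Total grants: ${total_grants(grants) / 1e9:.3f} billion")
    for name, pct in grant_share_of_investment(grants).items():
        print(f"{name}: {pct}% of planned investment")
```

Running it reports about $29.3 billion in grants across the seven listed recipients, with grants covering roughly 8.5% (Intel) to 14.2% (Samsung) of each company's planned investment.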

Intel’s Massive Plans

Intel is receiving the largest share of the pie, with $8.5 billion in grants (plus an additional $11 billion in government loans). This grant accounts for 22% of the CHIPS Act’s total subsidies for chip production.
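The 22% figure can be sanity-checked with simple arithmetic. The article does not state the denominator, so this sketch assumes it is the roughly $39 billion in manufacturing incentives set aside by the CHIPS Act; the helper name is hypothetical.

```python
# Assumption: the denominator is the CHIPS Act's $39 billion pool of
# manufacturing incentives; the article itself does not state this figure.
MANUFACTURING_INCENTIVES_USD = 39_000_000_000
INTEL_GRANT_USD = 8_500_000_000  # Intel's grant, from the table above


def share_of_pool(grant_usd, pool_usd=MANUFACTURING_INCENTIVES_USD):
    """Hypothetical helper: return a grant as a percentage of the incentive pool."""
    return 100 * grant_usd / pool_usd


if __name__ == "__main__":
    # 8.5 / 39 is about 0.218, which rounds to the 22% quoted in the article
    print(f"Intel's share: {share_of_pool(INTEL_GRANT_USD):.1f}%")
```

Running it prints `Intel's share: 21.8%`, consistent with the rounded 22% under the stated assumption.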

For its part, Intel is expected to invest $100 billion to construct new fabs in Arizona and Ohio while modernizing and/or expanding existing fabs in Oregon and New Mexico. Intel could also claim another $25 billion in credits through the U.S. Treasury Department’s Investment Tax Credit.

TSMC Expands its U.S. Presence

TSMC, the world’s largest semiconductor foundry company, is receiving a hefty $6.6 billion to construct a new chip plant with three fabs in Arizona. The Taiwanese chipmaker is expected to invest $65 billion into the project.

The plant’s first fab is expected to be up and running in the first half of 2025, using 4-nanometer (nm) process technology. According to TrendForce, the other fabs will produce chips on more advanced 3 nm and 2 nm processes.

The Latest Grant Goes to Micron

Micron, the only U.S.-based manufacturer of memory chips, is set to receive $6.1 billion in grants to support its plans to invest $50 billion through 2030. This investment will be used to construct new fabs in Idaho and New York.

-

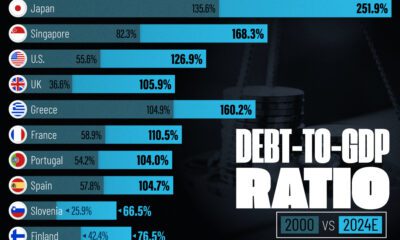

Debt1 week ago

Debt1 week agoHow Debt-to-GDP Ratios Have Changed Since 2000

-

Markets2 weeks ago

Markets2 weeks agoRanked: The World’s Top Flight Routes, by Revenue

-

Countries2 weeks ago

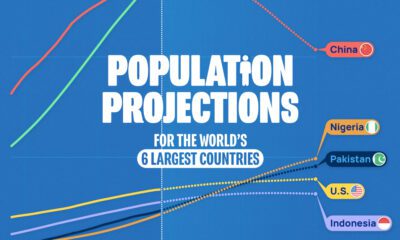

Countries2 weeks agoPopulation Projections: The World’s 6 Largest Countries in 2075

-

Markets2 weeks ago

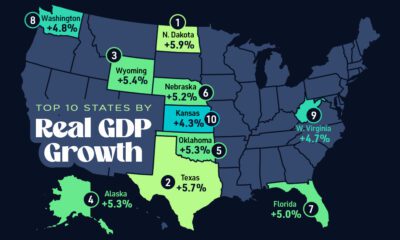

Markets2 weeks agoThe Top 10 States by Real GDP Growth in 2023

-

Demographics2 weeks ago

Demographics2 weeks agoThe Smallest Gender Wage Gaps in OECD Countries

-

United States2 weeks ago

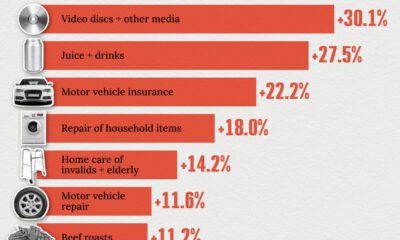

United States2 weeks agoWhere U.S. Inflation Hit the Hardest in March 2024

-

Green2 weeks ago

Green2 weeks agoTop Countries By Forest Growth Since 2001

-

United States2 weeks ago

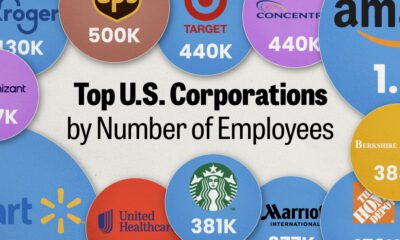

United States2 weeks agoRanked: The Largest U.S. Corporations by Number of Employees