Technology

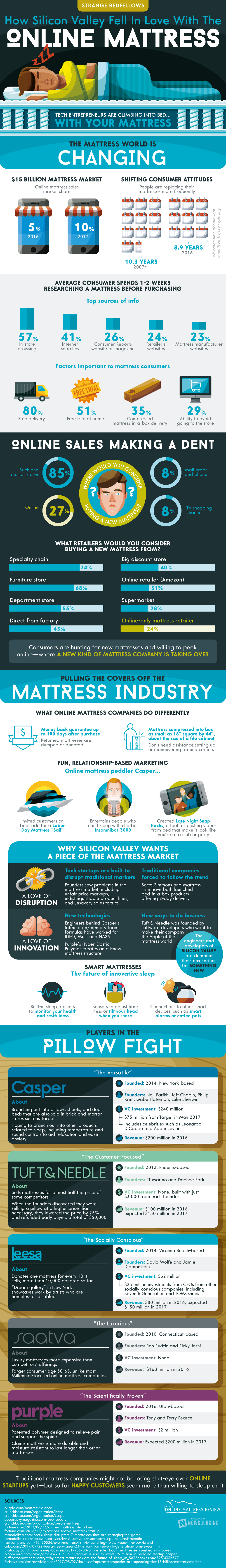

Why Tech is Targeting the $15 Billion Mattress Market

On the surface, the sleep industry appears to be a relatively undesirable space for a startup.

Beds and mattresses are heavy and bulky, and sales are traditionally based on a tactile experience that consumers have with products in physical stores. Holding inventory is expensive and risky, and shipping is a nightmare.

Sure, people are willing to shop online for almost everything these days – but when up to 40% of life is spent lying on a bed, isn’t that a product that should be tested out before a purchase decision is made?

Strange Bedfellows

Despite the conventional wisdom to the contrary, the $15 billion mattress industry has seen the entrance of several ambitious startup companies, and they are starting to put a dent in market share.

Today’s infographic from Online Mattress Review tells the story of how disruption is occurring in this unlikely space – and it all starts with big changes to the business model to make online mattress sales more palatable for both the company and the consumers.

An Updated Model

Here are a few key ways online mattress companies, like Casper or Purple, have changed their value proposition to make online sales work for both themselves and their customers:

Money-back guarantee

By offering a money-back guarantee of up to 100 days, online mattress companies give customers plenty of time to test their product. This reduces the chance of buyer’s remorse.

Going all-in on memory foam

Memory foam, like other mattress types that can be compressed down in size, allows for fast and easy shipping. Consumers can take a box the size of a filing cabinet and easily navigate it around corners and doorframes in a household setting.

Fun, relationship-based marketing

To appeal to the millennial market, Casper has taken on some quirky initiatives, such as creating Insomniabot-3000 (a chatbot for people who can’t sleep) and hosting a Labor Day Mattress “Sail” boat cruise.

Comfortable Growth

In 2016, online sales accounted for 5% of the mattress market, and the 2017 figure is expected to be at least double that.

While tech startups and the sleep industry may seem like strange bedfellows at first, it’s clear that consumers are embracing the chance to get in bed with the idea.

Technology

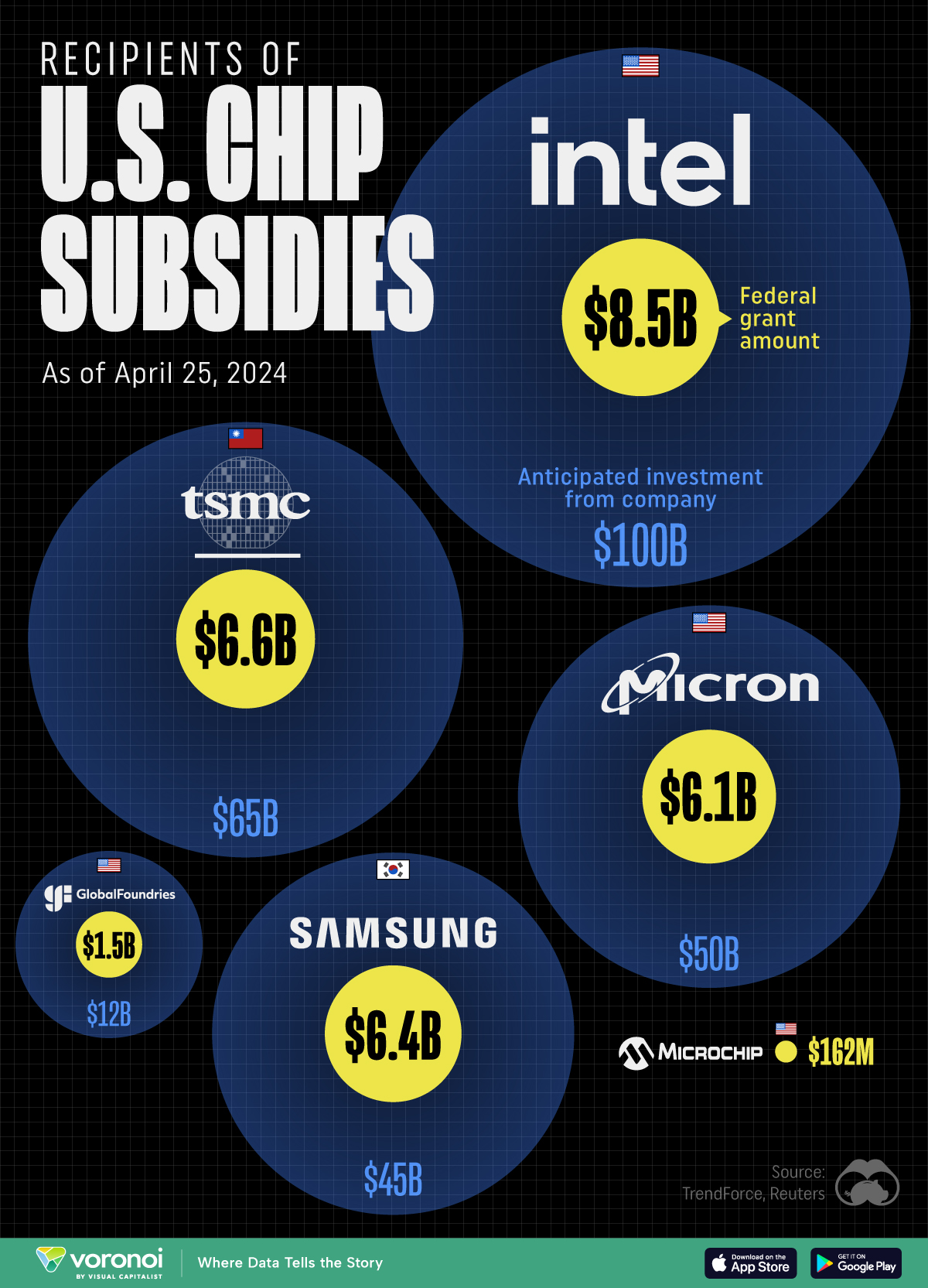

All of the Grants Given by the U.S. CHIPS Act

Intel, TSMC, and more have received billions in subsidies from the U.S. CHIPS Act in 2024.

This was originally posted on our Voronoi app. Download the app for free on iOS or Android and discover incredible data-driven charts from a variety of trusted sources.

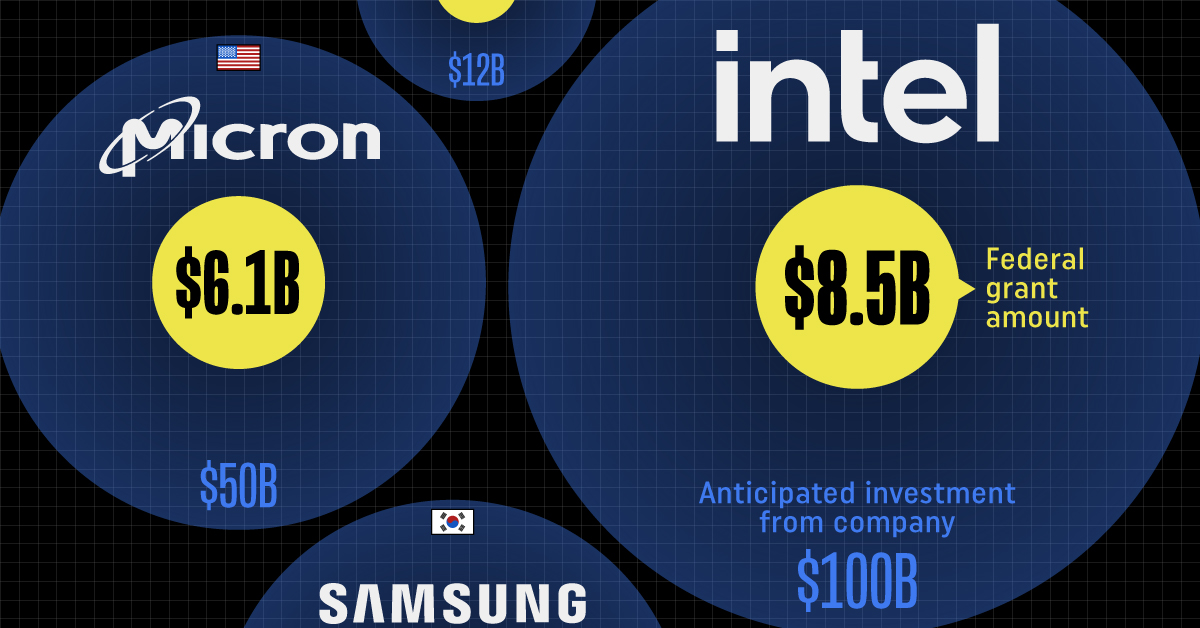

This visualization shows which companies are receiving grants from the U.S. CHIPS Act, as of April 25, 2024. The CHIPS Act is a federal statute signed into law by President Joe Biden that authorizes roughly $280 billion in new funding for science and technology, including about $52.7 billion to boost domestic research and manufacturing of semiconductors.

The grant amounts visualized in this graphic are intended to accelerate the construction of semiconductor fabrication plants (fabs) across the United States.

Data and Company Highlights

The figures we used to create this graphic were collected from a variety of public news sources. The Semiconductor Industry Association (SIA) also maintains a tracker for CHIPS Act recipients, though at the time of writing it does not have the latest details for Micron.

| Company | Federal Grant Amount | Anticipated Investment From Company |
|---|---|---|
| 🇺🇸 Intel | $8,500,000,000 | $100,000,000,000 |
| 🇹🇼 TSMC | $6,600,000,000 | $65,000,000,000 |
| 🇰🇷 Samsung | $6,400,000,000 | $45,000,000,000 |
| 🇺🇸 Micron | $6,100,000,000 | $50,000,000,000 |
| 🇺🇸 GlobalFoundries | $1,500,000,000 | $12,000,000,000 |
| 🇺🇸 Microchip | $162,000,000 | N/A |
| 🇬🇧 BAE Systems | $35,000,000 | N/A |

BAE Systems was not included in the graphic due to size limitations.
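To compare the awards on a common footing, the table's figures can be tabulated directly. The Python sketch below is illustrative only: the variable and function names are hypothetical, and it uses nothing beyond the amounts listed above to compute the combined grant total and each grant as a share of the company's anticipated investment.

```python
# Grant and anticipated-investment figures copied from the table above (USD).
# None means the table lists "N/A" for anticipated investment.
GRANTS = {
    "Intel": (8_500_000_000, 100_000_000_000),
    "TSMC": (6_600_000_000, 65_000_000_000),
    "Samsung": (6_400_000_000, 45_000_000_000),
    "Micron": (6_100_000_000, 50_000_000_000),
    "GlobalFoundries": (1_500_000_000, 12_000_000_000),
    "Microchip": (162_000_000, None),
    "BAE Systems": (35_000_000, None),
}


def total_grants(data):
    """Sum the federal grant amounts across all listed companies."""
    return sum(grant for grant, _ in data.values())


def grant_to_investment(data):
    """Return grant / anticipated investment for companies that report one."""
    return {
        name: grant / investment
        for name, (grant, investment) in data.items()
        if investment is not None
    }


if __name__ == "__main__":
    print(f"Total grants listed: ${total_grants(GRANTS) / 1e9:.1f} billion")
    for name, ratio in grant_to_investment(GRANTS).items():
        print(f"{name}: grant equals {ratio:.1%} of anticipated investment")
```

On these figures, the seven grants sum to roughly $29.3 billion. Samsung's grant is the largest relative to its anticipated investment (about 14%), while Intel's is the smallest (8.5%).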

Intel’s Massive Plans

Intel is receiving the largest share of the pie, with $8.5 billion in grants (plus an additional $11 billion in government loans). This grant accounts for 22% of the CHIPS Act’s total subsidies for chip production.
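The 22% figure can be checked with simple arithmetic. The sketch below assumes that the pool of CHIPS Act manufacturing incentives is $39 billion, a figure that is not stated in this article; the constant and function names are hypothetical.

```python
# Assumption (not stated in the article): the CHIPS Act sets aside
# $39 billion for chip manufacturing incentives.
MANUFACTURING_INCENTIVES_USD = 39_000_000_000

# From the article: Intel's grant amount.
INTEL_GRANT_USD = 8_500_000_000


def share_of_pool(grant, pool):
    """Return the grant as a fraction of the total incentive pool."""
    return grant / pool


if __name__ == "__main__":
    share = share_of_pool(INTEL_GRANT_USD, MANUFACTURING_INCENTIVES_USD)
    print(f"Intel's share of manufacturing incentives: {share:.0%}")
```

Under that assumption, the share rounds to the 22% cited above.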

From Intel’s side, the company is expected to invest $100 billion to construct new fabs in Arizona and Ohio, while modernizing and/or expanding existing fabs in Oregon and New Mexico. Intel could also claim another $25 billion in credits through the U.S. Treasury Department’s Investment Tax Credit.

TSMC Expands its U.S. Presence

TSMC, the world’s largest semiconductor foundry company, is receiving a hefty $6.6 billion to construct a new chip plant with three fabs in Arizona. The Taiwanese chipmaker is expected to invest $65 billion into the project.

The plant’s first fab will be up and running in the first half of 2025, leveraging 4 nm (nanometer) technology. According to TrendForce, the other fabs will produce chips on more advanced 3 nm and 2 nm processes.

The Latest Grant Goes to Micron

Micron, the only U.S.-based manufacturer of memory chips, is set to receive $6.1 billion in grants to support its plan to invest $50 billion through 2030. This investment will be used to construct new fabs in Idaho and New York.

-

Energy1 week ago

Energy1 week agoThe World’s Biggest Nuclear Energy Producers

-

Money2 weeks ago

Money2 weeks agoWhich States Have the Highest Minimum Wage in America?

-

Technology2 weeks ago

Technology2 weeks agoRanked: Semiconductor Companies by Industry Revenue Share

-

Markets2 weeks ago

Markets2 weeks agoRanked: The World’s Top Flight Routes, by Revenue

-

Countries2 weeks ago

Countries2 weeks agoPopulation Projections: The World’s 6 Largest Countries in 2075

-

Markets2 weeks ago

Markets2 weeks agoThe Top 10 States by Real GDP Growth in 2023

-

Demographics2 weeks ago

Demographics2 weeks agoThe Smallest Gender Wage Gaps in OECD Countries

-

United States2 weeks ago

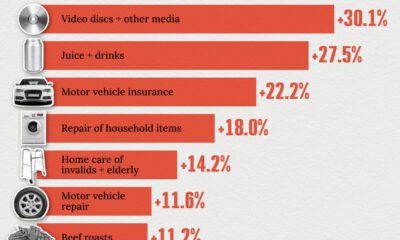

United States2 weeks agoWhere U.S. Inflation Hit the Hardest in March 2024