Technology

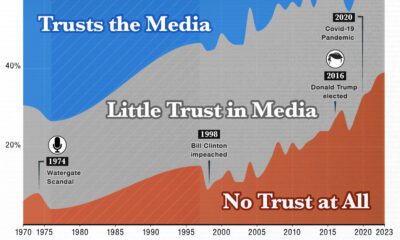

Here Are 13 Ideas to Fight Fake News – and a Big Problem With All of Them

Will humans or computer algorithms be the future arbiters of “truth”?

Today’s infographic from Futurism summarizes the ideas that academics, technologists, and other experts are proposing to stop the spread of fake news.

Below the infographic, we raise our concerns about these methods.

While fake news is certainly problematic, the solutions proposed to penalize articles deemed to be “untrue” are just as scary.

By centralizing fact checking, we create a system that is inherently fragile, biased, and prone to abuse. Furthermore, axing websites deemed to be “untrue” would limit independent thought and discourse while allowing legacy media to remain entrenched.

Centralizing “Truth”

It could be argued that the best thing about the internet is that it decentralizes content, allowing any individual, blog, or independent media company to stimulate discussion and new ideas with low barriers to entry. Millions of new entrants have changed the media landscape, leaving traditional media flailing to adjust their revenue models while keeping their influence intact.

If we say that “truth” can only be verified by a centralized mechanism – a group of people, or an algorithm written by a group of people – we are accepting that some sources will be arbitrarily preferred while others will not (unless they conform to certain standards).

Under this mechanism, it is almost certain that well-established journalistic sources like The New York Times or The Washington Post will be the most trusted. By the same token, newer sources (like independent media, or blogs written by emerging thought leaders) will not be able to gain traction unless they reference, or receive backing from, these “trusted” gatekeepers.

The Impact?

This centralization is problematic – and here is why, step by step:

First, either method (human or computer) must rely on preconceived notions of what is “authoritative” and “true” to make decisions, so both will be biased in some way. Humans will lean towards a particular consensus or viewpoint, while computers must rank authority based on factors such as PageRank, backlinks, source recognition, or headline and content analysis.
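To illustrate how an algorithm inherits a notion of authority, here is a minimal PageRank sketch in Python. It assumes a tiny hypothetical link graph (the site names are invented) and the commonly cited damping factor of 0.85; it is not how any particular platform ranks news. The point is that a site's score depends entirely on who already links to it.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Power-iteration PageRank over a dict mapping each site to the sites it links to."""
    sites = list(links)
    n = len(sites)
    rank = {s: 1.0 / n for s in sites}  # start with equal authority for every site
    for _ in range(iterations):
        # Every site gets the same small baseline, regardless of its content
        new_rank = {s: (1.0 - damping) / n for s in sites}
        for src, targets in links.items():
            if targets:
                # A site passes its current authority evenly to the sites it links to
                share = damping * rank[src] / len(targets)
                for dst in targets:
                    new_rank[dst] += share
            else:
                # A site with no outbound links spreads its authority across all sites
                for dst in sites:
                    new_rank[dst] += damping * rank[src] / n
        rank = new_rank
    return rank


# Hypothetical link graph: both blogs link to the established outlet
links = {
    "legacy_outlet": ["wire_service"],
    "wire_service": ["legacy_outlet"],
    "blog_a": ["legacy_outlet"],
    "blog_b": ["legacy_outlet", "blog_a"],
}
scores = pagerank(links)
for site, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(f"{site:15s} {score:.3f}")
```

In this toy graph, blog_b receives no inbound links, so it ends up with only the baseline score of (1 - 0.85) / 4, however accurate its content may be.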

Next, there is a snowball effect: if only posts referencing these authoritative sources of “truth” can get traction on social media, then these sources become even more authoritative over time. This creates an entrenchment that will be difficult to overcome, and new bloggers or media outlets will only be able to move up the ladder by associating their posts with an existing consensus. Grassroots movements and new ideas will suffer – especially those that conflict with mainstream beliefs, government, or corporate power.
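The snowball effect resembles what network scientists call preferential attachment, often modeled as a Pólya urn. The sketch below is our own illustration, not a model of any real platform: it assumes one hypothetical incumbent outlet starting with 50 citations and five newcomers with one each, and every new post cites an existing outlet with probability proportional to the citations it already has.

```python
import random


def simulate_citations(initial, new_posts, seed=42):
    """Pólya-urn style simulation: each new post cites one outlet with
    probability proportional to the citations that outlet already has."""
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    counts = dict(initial)     # copy so the starting counts are left untouched
    outlets = list(counts)
    for _ in range(new_posts):
        weights = [counts[o] for o in outlets]
        chosen = rng.choices(outlets, weights=weights, k=1)[0]
        counts[chosen] += 1    # each citation makes the next one more likely
    return counts


# Hypothetical starting point: one entrenched outlet and five newcomers
initial = {"incumbent": 50, **{f"newcomer_{i}": 1 for i in range(1, 6)}}
final = simulate_citations(initial, new_posts=1000)
total = sum(final.values())
for outlet, count in final.items():
    print(f"{outlet:12s} {count:5d} citations ({count / total:.1%})")
```

Because every citation feeds back into the next draw, the incumbent's early lead tends to persist: in this model its expected share stays at its starting 50/55 (about 91%) no matter how many posts are added.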

Finally, this raises concerns about who fact checks the fact checkers. Forbes has a great post on this, showing that Snopes.com (a fact checker) could not even verify basic truths about its own operations.

Removing articles deemed to be “untrue” is a form of censorship. While it may help remove many ridiculous articles from people’s social feeds, it will also erode the qualities that made the internet so great in the first place: its decentralized nature, and the ability of any one person to make a profound impact on the world.


Ranked: Semiconductor Companies by Industry Revenue Share

Nvidia is coming for Intel’s crown. Samsung is losing ground. AI is transforming the space. We break down revenue for semiconductor companies.

Semiconductor Companies by Industry Revenue Share

This was originally posted on our Voronoi app.

Did you know that some computer chips are now retailing for the price of a new BMW?

As computers have spread into nearly every sphere of life, so have the chips that power them, boosting the revenues of the businesses that design them.

But how did the various chipmakers measure up against each other last year?

We rank the biggest semiconductor companies by their percentage share of the industry’s revenues in 2023, using data from Omdia research.

Which Chip Company Made the Most Money in 2023?

Market leader and industry-defining veteran Intel still holds the crown for the most revenue in the sector, crossing $50 billion in 2023, or about 9.4% of the industry’s total revenue.

All is not well at Intel, however, with the company’s stock price down over 20% year-to-date after it revealed billion-dollar losses in its foundry business.

| Rank | Company | 2023 Revenue (USD) | % of Industry Revenue |
|---|---|---|---|
| 1 | Intel | $51B | 9.4% |
| 2 | NVIDIA | $49B | 9.0% |
| 3 | Samsung Electronics | $44B | 8.1% |
| 4 | Qualcomm | $31B | 5.7% |
| 5 | Broadcom | $28B | 5.2% |
| 6 | SK Hynix | $24B | 4.4% |
| 7 | AMD | $22B | 4.1% |
| 8 | Apple | $19B | 3.4% |
| 9 | Infineon Tech | $17B | 3.2% |
| 10 | STMicroelectronics | $17B | 3.2% |
| 11 | Texas Instruments | $17B | 3.1% |
| 12 | Micron Technology | $16B | 2.9% |
| 13 | MediaTek | $14B | 2.6% |
| 14 | NXP | $13B | 2.4% |
| 15 | Analog Devices | $12B | 2.2% |
| 16 | Renesas Electronics Corporation | $11B | 1.9% |
| 17 | Sony Semiconductor Solutions Corporation | $10B | 1.9% |
| 18 | Microchip Technology | $8B | 1.5% |
| 19 | Onsemi | $8B | 1.4% |
| 20 | KIOXIA Corporation | $7B | 1.3% |
| N/A | Others | $126B | 23.2% |
| N/A | Total | $545B | 100% |

Note: Figures are rounded, so the rows may not add up to the total and the percentages may not sum to 100%.
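The share column is simply each company's revenue divided by the industry total. The Python sketch below recomputes it from the rounded figures in the table (the variable and function names are our own); because the inputs are rounded to the nearest billion, a few results can differ from the published percentages in the last decimal place.

```python
# 2023 revenue in USD billions, copied from the rounded table (top 20 plus "Others")
revenue_2023 = {
    "Intel": 51, "NVIDIA": 49, "Samsung Electronics": 44, "Qualcomm": 31,
    "Broadcom": 28, "SK Hynix": 24, "AMD": 22, "Apple": 19,
    "Infineon Tech": 17, "STMicroelectronics": 17, "Texas Instruments": 17,
    "Micron Technology": 16, "MediaTek": 14, "NXP": 13, "Analog Devices": 12,
    "Renesas Electronics Corporation": 11,
    "Sony Semiconductor Solutions Corporation": 10,
    "Microchip Technology": 8, "Onsemi": 8, "KIOXIA Corporation": 7,
    "Others": 126,
}
REPORTED_TOTAL = 545  # the table's "Total" row, USD billions


def share_of_industry(revenue, total=REPORTED_TOTAL):
    """Percentage of industry revenue, rounded to one decimal place."""
    return round(100 * revenue / total, 1)


summed = sum(revenue_2023.values())
print(f"Sum of rounded rows: ${summed}B vs reported total: ${REPORTED_TOTAL}B")
for company in ("Intel", "NVIDIA", "Samsung Electronics", "Broadcom"):
    print(f"{company:20s} {share_of_industry(revenue_2023[company])}%")
```

For example, Broadcom's rounded $28B gives 5.1% rather than the published 5.2%, and the rows sum to $544B rather than the reported $545B: exactly the rounding effect the note describes.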

Meanwhile, Nvidia is very close to overtaking Intel after reporting $49 billion in revenue for 2023. That is more than double its 2022 revenue of $21 billion, and it lifted Nvidia’s share of industry revenue to 9%.
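The “more than double” claim is easy to check from the two rounded figures quoted above. A minimal Python sketch (the variable names are our own):

```python
nvidia_2022 = 21  # USD billions, rounded figure quoted in the text
nvidia_2023 = 49  # USD billions, rounded figure quoted in the text

# Ratio of this year's revenue to last year's, and the equivalent percentage growth
growth_multiple = nvidia_2023 / nvidia_2022
yoy_growth_pct = 100 * (nvidia_2023 - nvidia_2022) / nvidia_2022
print(f"{growth_multiple:.2f}x 2022 revenue, or {yoy_growth_pct:.0f}% year-over-year growth")
```

With these inputs, revenue grew roughly 2.3 times, or about 133% year over year.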

Nvidia’s meteoric rise has gotten a huge thumbs-up from investors. It became a trillion-dollar company last year, and this year it set the record for the largest single-day gain in market capitalization.

Other chipmakers haven’t been as successful. Out of the top 20 semiconductor companies by revenue, 12 did not match their 2022 revenues, including big names like Intel, Samsung, and AMD.

The Many Different Types of Chipmakers

All of these companies may belong to the same industry, but they don’t focus on the same niche.

According to Investopedia, there are four major types of chips, depending on their functionality: microprocessors, memory chips, standard chips, and complex systems on a chip.

Nvidia’s core business was once graphics processing units (GPUs) for computers, but in recent years its focus has shifted drastically towards processors for analytics and AI.

These specialized chips seem to be where most of the sector’s growth is occurring. By contrast, companies focused largely on memory (Samsung, SK Hynix, and Micron Technology) saw their revenues peak in the mid-2010s.

-

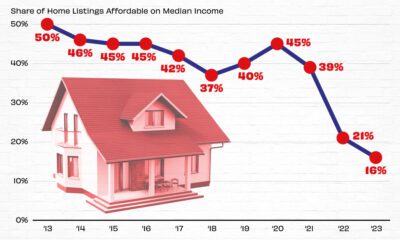

Real Estate2 weeks ago

Real Estate2 weeks agoVisualizing America’s Shortage of Affordable Homes

-

Technology1 week ago

Technology1 week agoRanked: Semiconductor Companies by Industry Revenue Share

-

Money2 weeks ago

Money2 weeks agoWhich States Have the Highest Minimum Wage in America?

-

Real Estate2 weeks ago

Real Estate2 weeks agoRanked: The Most Valuable Housing Markets in America

-

Business2 weeks ago

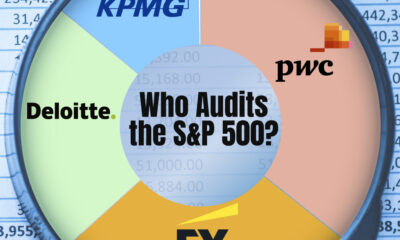

Business2 weeks agoCharted: Big Four Market Share by S&P 500 Audits

-

AI2 weeks ago

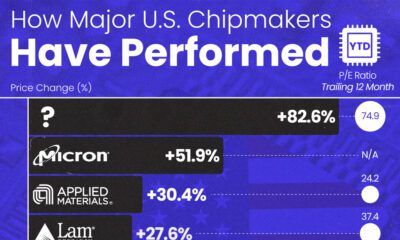

AI2 weeks agoThe Stock Performance of U.S. Chipmakers So Far in 2024

-

Misc2 weeks ago

Misc2 weeks agoAlmost Every EV Stock is Down After Q1 2024

-

Money2 weeks ago

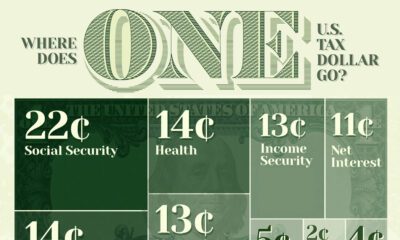

Money2 weeks agoWhere Does One U.S. Tax Dollar Go?